
Enterprise-Grade Kubernetes CI/CD System

Overview

Built a production-ready Kubernetes CI/CD pipeline from scratch using industry best practices. This comprehensive project demonstrates the evolution from rapid prototyping to enterprise-grade infrastructure that is scalable, secure, and maintainable for multi-application deployments.

Project Philosophy

Unlike my previous two projects, which were rapid prototypes built on a "build fast, break things, learn" mindset, this project follows a gradual, iterative approach that simulates a professional working environment, with systematic improvements and best practices applied at each stage.

Key Highlights

- 3-node bare-metal Kubernetes cluster (1 control plane, 2 workers)

- GitOps workflow with ArgoCD for declarative continuous delivery

- Multi-environment architecture (dev, staging, production)

- Reusable GitHub Actions CI workflow templates

- Comprehensive security policies and enforcement

- Cloudflare Tunnel integration for secure public access

- Complete infrastructure validation pipeline

Architecture Components

Infrastructure Layer

- Kubernetes Cluster: 3-node bare-metal setup with Flannel CNI

- Load Balancer: MetalLB for external IP allocation

- Ingress Controller: Nginx for traffic routing

- Container Runtime: containerd with the systemd cgroup driver

- Cloudflared: Cloudflare Tunnel integration for secure public access
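
To illustrate the MetalLB piece, an address pool might be declared roughly as follows. This is a sketch only: the resource names and the address range are placeholders, and Layer 2 mode is an assumption, since the write-up does not say which MetalLB mode the cluster uses.

```yaml
# Hypothetical MetalLB configuration; names and address range are placeholders.
apiVersion: metallb.io/v1beta1
kind: IPAddressPool
metadata:
  name: lan-pool                      # assumed name
  namespace: metallb-system
spec:
  addresses:
    - 192.168.1.240-192.168.1.250     # example range on the cluster's LAN
---
# Announces the pool on the local network (Layer 2 mode is an assumption).
apiVersion: metallb.io/v1beta1
kind: L2Advertisement
metadata:
  name: lan-l2                        # assumed name
  namespace: metallb-system
spec:
  ipAddressPools:
    - lan-pool
```

With a pool like this in place, a Service of type LoadBalancer (for example the one fronting the ingress controller) would receive an external IP from the listed range.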

CI/CD Layer

- Source Control: GitHub with branch protection

- CI Pipeline: GitHub Actions with reusable workflows

- CD Pipeline: ArgoCD with automated sync policies

- Container Registry: Docker Hub for image storage

Security Layer

- Pod Security: Non-root containers, read-only filesystems

- Network Policies: Restricted pod-to-pod communication

- RBAC: Role-based access control for service accounts

- Policy Enforcement: Conftest with custom OPA policies
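
A pod template fragment along these lines would express the non-root and read-only filesystem settings listed above. It is a minimal sketch: the container name, the UID, and the writable `/tmp` mount are assumptions, not values taken from the project's manifests.

```yaml
# Illustrative pod template fragment; container name, UID and paths are assumptions.
spec:
  securityContext:
    runAsNonRoot: true                # refuse to start containers that run as root
    runAsUser: 1001                   # assumed non-root UID
    seccompProfile:
      type: RuntimeDefault
  containers:
    - name: web                       # assumed container name
      image: nishans0/next-portfolio:sha-abc123
      securityContext:
        allowPrivilegeEscalation: false
        readOnlyRootFilesystem: true  # root filesystem mounted read-only
        capabilities:
          drop: ["ALL"]
      volumeMounts:
        - name: tmp                   # writable scratch space for the app
          mountPath: /tmp
  volumes:
    - name: tmp
      emptyDir: {}
```

Applications that write to disk at runtime typically need an `emptyDir` such as the one above once the root filesystem is read-only.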

Deployment Strategy

- Configuration Management: Kustomize overlays for environment-specific configs

- Environment Isolation: Separate namespaces per environment (dev, stage, prod)

- Public Access: Cloudflare Tunnel (cloudflared sidecar)

- Zero Downtime: Rolling updates with pod disruption budgets
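
As a sketch of how rolling updates and a pod disruption budget can work together, consider the pair of resources below. Resource names, labels, and the replica count are assumptions chosen for illustration; only the image name comes from the workflow shown later in this write-up.

```yaml
# Illustrative Deployment + PodDisruptionBudget; names, labels and counts are assumptions.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: next-portfolio
spec:
  replicas: 2
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 0               # never drop below the desired replica count
      maxSurge: 1                     # start one new pod before removing an old one
  selector:
    matchLabels:
      app: next-portfolio
  template:
    metadata:
      labels:
        app: next-portfolio
    spec:
      containers:
        - name: web
          image: nishans0/next-portfolio:sha-abc123
---
apiVersion: policy/v1
kind: PodDisruptionBudget
metadata:
  name: next-portfolio
spec:
  minAvailable: 1                     # keep at least one pod during voluntary disruptions
  selector:
    matchLabels:
      app: next-portfolio
```

The rollout strategy covers image updates, while the budget covers voluntary disruptions such as node drains.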

Complete CI/CD Flow

End-to-end CI/CD flow from code commit to production deployment

CI Workflow Details

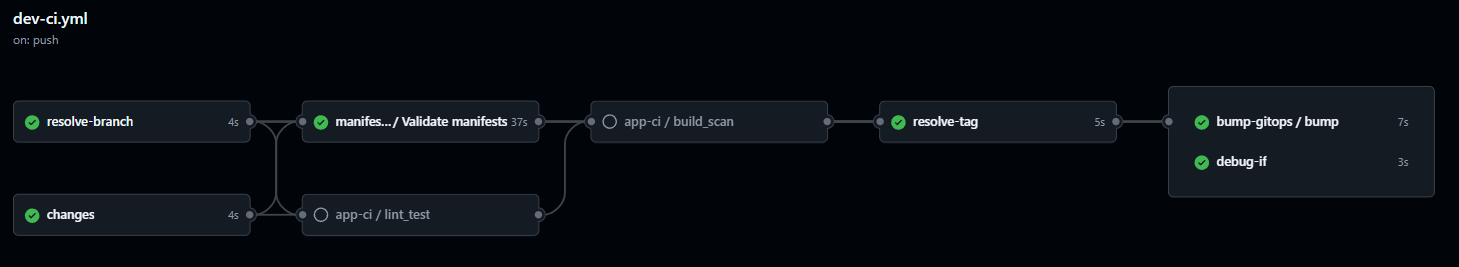

GitHub Actions CI workflow with validation, testing, and deployment stages

Intelligent CI Pipeline Orchestration

The CI workflow automatically detects changed files and executes appropriate workflows based on the type of changes. This app-specific workflow (dev-ci.yml) orchestrates calls to reusable workflows, ensuring efficient resource usage and fast feedback loops.

Complete Workflow Code (dev-ci.yml)

    name: Dev CI (App + Manifests + GitOps Bump)

    on:
      push:
        branches: ["dev", "stage", "prod"]
        paths:
          - "src/**"
          - "public/**"
          - "package.json"
          - "Dockerfile"
          - "manifests/**"
          - "policy/**"
      pull_request:
        branches: ["stage", "prod"]
        paths:
          - "manifests/**"
          - "policy/**"

    permissions:
      contents: write
      packages: write
      security-events: write

    jobs:
      # Job 0: detect which parts of the repository changed
      changes:
        runs-on: ubuntu-latest
        outputs:
          app: ${{ steps.filter.outputs.app }}
          manifests: ${{ steps.filter.outputs.manifests }}
        steps:
          - uses: actions/checkout@v4
          - uses: dorny/paths-filter@v3
            id: filter
            with:
              filters: |
                app:
                  - 'src/**'
                  - 'Dockerfile'
                  - 'package.json'
                manifests:
                  - 'manifests/**'
                  - 'policy/**'

      # Resolve the target branch: PR base branch, or the pushed branch
      resolve-branch:
        runs-on: ubuntu-latest
        outputs:
          target_branch: ${{ steps.resolve.outputs.target_branch }}
        steps:
          - id: resolve
            run: |
              TARGET_BRANCH="${{ github.event.pull_request.base.ref || github.ref_name }}"
              echo "target_branch=$TARGET_BRANCH" >> "$GITHUB_OUTPUT"

      # Job 1: build, test and publish the image (dev only)
      app-ci:
        needs: [changes, resolve-branch]
        if: ${{ needs.changes.outputs.app == 'true' &&
          needs.resolve-branch.outputs.target_branch == 'dev' }}
        uses: nishanau/ci-cd-templates/.github/workflows/ci-app.yml@main
        with:
          image_name: nishans0/next-portfolio
          context: .
          dockerfile: ./Dockerfile
          push_image: true
          run_tests: true
        secrets:
          DOCKERHUB_USERNAME: ${{ secrets.DOCKERHUB_USERNAME }}
          DOCKERHUB_TOKEN: ${{ secrets.DOCKERHUB_TOKEN }}

      # Job 2: validate manifests and policies for the target overlay
      manifests-ci:
        needs: [changes, resolve-branch]
        if: ${{ needs.changes.outputs.manifests == 'true' }}
        uses: nishanau/ci-cd-templates/.github/workflows/ci-manifests.yml@main
        with:
          overlay_path: manifests/overlays/${{ needs.resolve-branch.outputs.target_branch }}
          policies_path: policy
          kubeconform_flags: "--strict --ignore-missing-schemas"
        secrets: inherit

      # Job 3: write the image tag into the target overlay
      bump-gitops:
        needs: [app-ci, manifests-ci, resolve-branch]
        uses: nishanau/ci-cd-templates/.github/workflows/ci-gitops-bump.yml@main
        with:
          gitops_repo: nishanau/NextJSPortfolioSite
          gitops_path: manifests/overlays/${{ needs.resolve-branch.outputs.target_branch }}/kustomization.yaml
          image_name: docker.io/nishans0/next-portfolio
        secrets:
          gitops_pat: ${{ secrets.GITOPS_PAT }}

Workflow Jobs Breakdown

Job 0: Change Detection

The first job analyzes the commit to determine which workflows need to run:

- App Changes: Detects modifications to `src/**`, `Dockerfile`, `package.json`

- Manifest Changes: Detects changes to `manifests/**`, `policy/**`

- Smart Execution: Only runs necessary workflows, skipping irrelevant checks

Job 1: App CI (Build & Publish)

- Runs linting and unit tests for code quality

- Builds multi-stage Docker image with optimizations

- Performs security vulnerability scanning

- Pushes image to Docker Hub with SHA-based tag (`sha-{commit}`)

- Environment: Primarily runs on the `dev` branch

Job 2: Manifests CI (Infrastructure Validation)

- YAML linting for syntax validation

- Kubeconform schema validation against Kubernetes API

- Conftest policy enforcement (custom OPA/Rego rules)

- Kube-score best practices analysis

- Checkov IaC security scanning with SARIF output

- Environment: Runs on all branches
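
For readers unfamiliar with reusable workflows, the called side of such a job could look roughly like the sketch below. This is not the actual `ci-manifests.yml` template: the input names are taken from the caller shown earlier, but the step layout is an assumption, tool installation is omitted, and the kube-score and Checkov stages are left out for brevity.

```yaml
# Hypothetical sketch of a reusable manifests workflow (not the actual template).
name: CI Manifests (sketch)

on:
  workflow_call:
    inputs:
      overlay_path:
        type: string
        required: true
      policies_path:
        type: string
        default: policy
      kubeconform_flags:
        type: string
        default: "--strict"

jobs:
  validate:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      # Assumes yamllint, kustomize, kubeconform and conftest are already
      # available on the runner (installation steps omitted).
      - name: Lint YAML
        run: yamllint manifests/
      - name: Render overlay
        run: kustomize build "${{ inputs.overlay_path }}" > rendered.yaml
      - name: Validate schemas
        run: kubeconform ${{ inputs.kubeconform_flags }} rendered.yaml
      - name: Enforce policies
        run: conftest test --policy "${{ inputs.policies_path }}" rendered.yaml
```

Rendering the overlay once and reusing `rendered.yaml` keeps every later check operating on exactly the same output.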

Job 3: Tag Resolution & GitOps Bump

- Dev: Uses current commit SHA after successful app build

- Stage: Copies verified tag from dev overlay (promotion flow)

- Prod: Copies validated tag from stage overlay

- Commits tag change to trigger ArgoCD sync
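
The tag that gets bumped typically lives in the overlay's `images` section. A dev overlay might look something like this; the namespace and the exact layout are assumptions, while the image name and tag format come from the workflow above.

```yaml
# manifests/overlays/dev/kustomization.yaml (illustrative layout)
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization
namespace: dev                          # assumed namespace name
resources:
  - ../../base
images:
  - name: nishans0/next-portfolio       # must match the image name used in base
    newName: docker.io/nishans0/next-portfolio
    newTag: sha-abc123                  # the field a bump commit rewrites
```

Because only `newTag` changes between commits, the Git diff for each deployment is a single line, which is what makes promotion by copying the tag between overlays straightforward.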

Environment-Specific Behavior

Development (dev branch)

- Trigger: Push to `dev`

- Tag Format: `sha-{commit-hash}`

- Purpose: Rapid iteration and testing

Staging (stage branch)

- Trigger: Merge from `dev`

- Tag Source: Latest tag from the `dev` overlay

- Purpose: Pre-production testing with stable builds

Production (prod branch)

- Trigger: Merge from `stage`

- Tag Source: Latest tag from the `stage` overlay

- Purpose: Production deployment with battle-tested images

Tag Bump Decision Matrix

Dev Branch

- App code only: ✅ Builds → ✅ Updates tag → ✅ Deploys

- Manifests only: ✅ Validates → ❌ No tag update

- App build failed: ❌ No tag update → ❌ No deploy

Stage / Prod Branch

- Promotion: ✅ Copies upstream tag → ✅ Deploys

- Policy validation failed: ❌ Blocks deploy

- Emergency rollback: Manual tag edit → ArgoCD auto-syncs

Key Workflow Characteristics

- Immutable Images: Once built in dev, same image promotes through environments

- Fail-Safe Design: Any validation failure blocks deployment

- Audit Trail: Git history tracks all tag changes and deployments

- Rollback Support: Manual tag edits enable instant rollbacks via ArgoCD

- Zero Downtime: Rolling updates ensure continuous availability

GitOps Tag Propagation Flow

    # Development → Staging → Production

    1. Dev: Build new image
       - Tag: sha-abc123
       - Push to Docker Hub
       - Update: manifests/overlays/dev/kustomization.yaml

    2. Stage: Promote verified build
       - Read tag from: manifests/overlays/dev/kustomization.yaml
       - Copy tag: sha-abc123
       - Update: manifests/overlays/stage/kustomization.yaml

    3. Prod: Deploy battle-tested image
       - Read tag from: manifests/overlays/stage/kustomization.yaml
       - Copy tag: sha-abc123
       - Update: manifests/overlays/prod/kustomization.yaml

    ✨ Same image (sha-abc123) deployed across all environments

Implementation Details

Cluster Setup

- Disabled swap and configured kernel parameters

- Installed containerd as container runtime

- Configured systemd cgroup driver for compatibility

- Initialized control plane with custom pod network CIDR

- Deployed Flannel CNI for pod networking

- Joined worker nodes using secure tokens
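
The control-plane initialization step can be captured in a kubeadm configuration file such as the sketch below, passed via `kubeadm init --config`. The CIDR shown is Flannel's default and is only a placeholder, since the actual value used is not stated; the `kubeadm.k8s.io` API version also depends on the kubeadm release in use.

```yaml
# kubeadm-config.yaml (illustrative); values are assumptions, not the project's settings.
apiVersion: kubeadm.k8s.io/v1beta3
kind: ClusterConfiguration
networking:
  podSubnet: 10.244.0.0/16      # placeholder: Flannel's default pod network CIDR
---
apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
cgroupDriver: systemd           # must match the cgroup driver containerd is configured with
```

The matching containerd setting (`SystemdCgroup = true` in its `config.toml`) is configured separately and is not shown here.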

GitOps with ArgoCD

- Automated sync policies for hands-off deployments

- Multi-environment management (dev/stage/prod namespaces)

- Self-healing capabilities for drift detection

- Rollback support for failed deployments

- Health status monitoring and notifications
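
An ArgoCD `Application` with automated sync and self-healing might be defined roughly as follows for the dev environment. The application name, target revision, and namespaces are assumptions, and the repository URL is inferred from the `gitops_repo` value in the workflow rather than confirmed.

```yaml
# Illustrative ArgoCD Application; names, revision and namespaces are assumptions.
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: next-portfolio-dev
  namespace: argocd
spec:
  project: default
  source:
    repoURL: https://github.com/nishanau/NextJSPortfolioSite.git   # inferred from gitops_repo
    targetRevision: dev                    # assumed branch to track
    path: manifests/overlays/dev
  destination:
    server: https://kubernetes.default.svc
    namespace: dev
  syncPolicy:
    automated:
      prune: true                          # delete resources removed from Git
      selfHeal: true                       # revert manual changes (drift) in the cluster
    syncOptions:
      - CreateNamespace=true
```

One such resource per environment, each pointing at its own overlay path, would give the dev/stage/prod separation described above.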

Multi-Environment Architecture

    manifests/
    ├── base/                 # Base resources
    │   ├── deployment.yaml
    │   ├── service.yaml
    │   ├── pdb.yaml
    │   └── sa.yaml
    └── overlays/
        ├── dev/              # Development environment
        ├── stage/            # Staging environment
        └── prod/             # Production environment

Reusable CI Workflows

- Validation: YAML linting, schema validation

- Testing: Policy checks with Conftest

- Building: Docker multi-stage builds

- Publishing: Tagged images to Docker Hub

- Deployment: ArgoCD sync triggers

Security Implementation

- Pod security contexts (non-root, read-only FS)

- Network policies for traffic control

- RBAC with least-privilege service accounts

- Automated policy enforcement with Conftest

- Image scanning in CI pipeline

- Secret management best practices
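
To make the network policy item concrete, a default-deny policy paired with a narrow allow rule could look like the sketch below. The namespace names, pod label, and port 3000 are assumptions. Note also that enforcement depends on the CNI: Flannel on its own does not implement NetworkPolicy, so this assumes a policy-capable component is present in the cluster.

```yaml
# Illustrative policies; namespaces, labels and port are assumptions.
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: default-deny-ingress
  namespace: dev
spec:
  podSelector: {}                 # selects every pod in the namespace
  policyTypes:
    - Ingress                     # no ingress rules listed, so all ingress is denied
---
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: allow-from-ingress-nginx
  namespace: dev
spec:
  podSelector:
    matchLabels:
      app: next-portfolio
  policyTypes:
    - Ingress
  ingress:
    - from:
        - namespaceSelector:
            matchLabels:
              kubernetes.io/metadata.name: ingress-nginx   # assumed controller namespace
      ports:
        - protocol: TCP
          port: 3000              # assumed application port
```

Policies are additive, so the second resource opens only the one path the application needs on top of the default deny.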

Validation Pipeline

    # YAML Lint
    yamllint manifests/

    # Schema Validation
    kustomize build manifests/overlays/dev | kubeconform --strict

    # Policy Testing
    kustomize build manifests/overlays/dev | conftest test -

    # Best Practices Check
    kustomize build manifests/overlays/dev | kube-score score -

Cloudflare Tunnel Integration

- Cloudflared deployed as sidecar container

- Automatic DNS management

- Zero-trust security model

- No firewall rule changes needed

- DDoS protection included
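
A sidecar arrangement of this kind might be expressed in the pod template as shown below. This is a sketch under assumptions: the Secret name and key are hypothetical, the tunnel is assumed to be remotely managed (token-based) with its public hostname routed to `http://localhost:3000`, and the application port is not confirmed by the write-up.

```yaml
# Pod template fragment (illustrative); secret name, key and port are assumptions.
spec:
  containers:
    - name: web
      image: nishans0/next-portfolio:sha-abc123
      ports:
        - containerPort: 3000                 # assumed application port
    - name: cloudflared
      image: cloudflare/cloudflared:latest    # pin a specific version in practice
      args: ["tunnel", "--no-autoupdate", "run"]
      env:
        - name: TUNNEL_TOKEN                  # read by cloudflared as the tunnel token
          valueFrom:
            secretKeyRef:
              name: cloudflared-token         # hypothetical Secret
              key: token
```

Because the tunnel connects outbound from inside the pod, no inbound port needs to be opened on the network edge, which matches the "no firewall rule changes" point above.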

Key Learnings & Evolution

- Before: Quick deployments, minimal validation

- Now: Comprehensive testing, policy enforcement

- Result: Confidence in production deployments with full auditability

Technical Skills Demonstrated

- Kubernetes cluster administration

- Container orchestration and networking

- GitOps and declarative deployment

- CI/CD pipeline design and implementation

- Security policy creation and enforcement

- Infrastructure validation and testing

- Multi-environment configuration management

- Monitoring and observability setup


Future Enhancements

- Prometheus & Grafana for monitoring

- EFK Stack for centralized logging

- Helm charts for package management

- Terraform for infrastructure automation

- Multi-cluster federation

- Service mesh (Istio/Linkerd) integration